DoubleQuoting Andreessen with Turing

[ by Charles Cameron — counterintuitive insights are like eddies in group mind ]


**

Adam Elkus said a while back that he wondered “if @pmarca and @hipbonegamer could team up for a double quote post.”

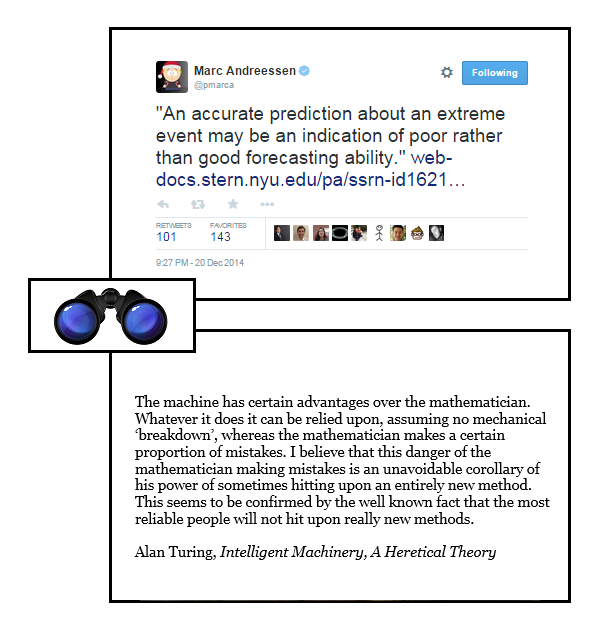

Well, I’m @hipbonegamer, and @pmarca is Marc Andreessen. We haven’t teamed up as such, but the DoubleQuote above pairs a tweet from Marc two or three days ago with a paragraph from one of Alan Turing’s posthumously published essays, which I ran across yesterday and which seemed to echo Marc’s tweet; I have juxtaposed it with the tweet as my response. Effectively, Marc made the first move on this two-panel board and I responded with the second, and that’s how this most basic form of my HipBone Games is played.

The kinship between Marc’s tweet and Turing’s para is even stronger if you follow the link Marc offered in his tweet, which goes to a pre-pub paper by Jerker Denrell and Christina Fang titled “Predicting the Next Big Thing: Success as a Signal of Poor Judgment”, in which they suggest:

The explanation is that because extreme outcomes are very rare, managers who take into account all the available information are less likely to make such extreme predictions, whereas those who rely on heuristics and intuition are more likely to make extreme predictions. As such, if the outcome was in fact extreme, an individual who predicts accurately an extreme event is likely to be someone who relies on intuition, rather than someone who takes into account all available information. She is likely to be someone who raves about any new idea or product. However, such heuristics are unlikely to produce consistent success over a wide range of forecasts. Therefore, accurate predictions of an extreme event are likely to be an indication of poor overall forecasting ability, when judgment or forecasting ability is defined as the average level of forecast accuracy over a wide range of forecasts.

and then go on to demonstrate it:

Consistent with our model, both the experimental and field results demonstrate that in a dataset containing all predictions, an accurate prediction is an indication of good forecasting ability (i.e., high accuracy on all predictions). However, if we only consider extreme predictions, then an accurate prediction is in fact associated with poor forecasting ability.
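For readers who like to see the mechanism at work, here is a toy simulation of the idea. It is my own minimal sketch, not Denrell and Fang’s model or data. Everything in it is an assumption made for illustration: the two forecaster types (“careful” and “intuitive”), the weights 0.5 and 1.5 they put on a noisy signal, the normally distributed outcomes, and the threshold of 2.0 that defines an “extreme” event.

```python
import random


def simulate(n_events=20000, threshold=2.0, seed=0):
    """Compare two hypothetical forecaster types over many events.

    Returns (mse, hits): mean squared error over ALL events, and the
    number of extreme events each type correctly called as extreme.
    """
    rng = random.Random(seed)
    # Assumed weights: "careful" shrinks a noisy signal toward the mean,
    # "intuitive" overreacts to it.
    weights = {"careful": 0.5, "intuitive": 1.5}
    sq_err = {name: 0.0 for name in weights}
    hits = {name: 0 for name in weights}

    for _ in range(n_events):
        truth = rng.gauss(0, 1)  # the actual outcome; extremes are rare
        for name, w in weights.items():
            signal = truth + rng.gauss(0, 1)  # each forecaster's noisy view
            pred = w * signal
            sq_err[name] += (pred - truth) ** 2
            # A "hit": the outcome was extreme and so was the prediction.
            if truth > threshold and pred > threshold:
                hits[name] += 1

    mse = {name: sq_err[name] / n_events for name in weights}
    return mse, hits


mse, hits = simulate()
print("mean squared error over all events:", mse)
print("extreme events correctly called:", hits)
```

Under these assumptions the overreacting forecaster calls far more of the rare extreme events, yet has the larger error across all events, which is the pattern the quoted passage describes.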

**

The counterintuitive nature of this prediction is delightful in its own right: there’s a sense in which “going against the tide” of what appears obvious is part of a wider pattern that includes knots in a plank and eddies in a stream, close cousins to von Kármán’s vortex streets. And I suspect it’s that built-in paradox, which we perceive as “counterintuitive”, that caught the eye and attention of Turing, Denrell and Fang, Marc Andreessen and myself. Once again, form, i.e. pattern, is the indicator of interest.

So this DQ is for Marc and Adam, raising a toast to Alan Turing, in playful spirit and with season’s greetings.

December 23rd, 2014 at 7:33 pm

It sounds like Prospect Theory

http://en.wikipedia.org/wiki/Prospect_theory

People overweight low probability events and underweight high probability events.

Of course, if hitting big on an extreme event can be explained as actually a mistake, then thank God for mistakes.

December 24th, 2014 at 4:30 am

Hi Grurray:


Same ballpark, different game, I think. Prospect theory tells us which ways we tend to misjudge, while Turing et al. are telling us what happens when we overvalue the general judgmental skills of those who make one unlikely but correct prediction.


I suspect there are cases where an individual makes a correct prediction about what looks to others like a “low probability event” because they have an angle of vision that shows them some detail that others miss and that they can contextualize.